The black box problem

Everyone knows prompts matter. You’ve seen the Twitter threads. The Reddit posts. The “here’s my secret formula” guides. I’ve read most of them, and I actually learned from them. Step-by-step instructions instead of vague goals. A few examples so the model knows what you expect. A persona if it helps frame the task. These techniques are real and I use them daily.

But I was always missing something: I had no idea if I was getting better.

When you cook a meal, you taste it. When you write code, tests tell you if it works. When you write a prompt, you get… one output. Maybe a few if you’re being thorough. And then you make a judgment call based on vibes.

I’d tweak a prompt, run it, and think “that seems… better?” But I couldn’t be sure. Maybe it was better for this specific input and worse for everything else. Maybe I just got lucky. Maybe the change I made didn’t matter at all.

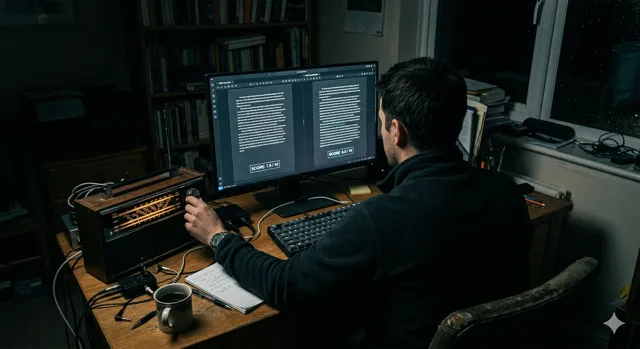

It felt like tuning a radio by ear in a dark room. You move the dial and hope you hit something good. I kept following best practices because they came from people who seemed to know what they were doing. But I never had a way to verify any of it actually worked.

What actually changed my thinking

I was reading through Anthropic’s documentation recently — properly this time, not just skimming — and I hit something that stopped me: prompt evaluation.

The concept isn’t complicated. But I’d never thought about prompting this way.

You start with a prompt, call it version one. Then you build a grader. Could be a script that checks outputs against a checklist, or another AI model that scores them. The point is: you have a repeatable way to measure quality.

Then you generate test cases. Not one or two. Ten inputs that represent the range of things your prompt needs to handle. You run all ten through your prompt, collect the outputs, and feed them to the grader. You get a score.

Now you have a baseline. Version one: 6.2 out of 10, or 4 out of 10 test cases pass, or whatever your grader measures.
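Here's roughly what those first steps could look like in code. Treat it as a minimal sketch under my own assumptions, not anything taken from Anthropic's documentation: the checklist items, the `fake_model` stand-in (used so it runs without an API key), and the 0 to 10 scaling are all hypothetical.

```python
from typing import Callable

# Hypothetical checklist for a summarization prompt; swap in what you care about.
CHECKS: dict[str, Callable[[str], bool]] = {
    "not_empty": lambda out: bool(out.strip()),
    "under_50_words": lambda out: len(out.split()) <= 50,
    "ends_with_period": lambda out: out.strip().endswith("."),
}


def grade(output: str) -> float:
    """Return the fraction of checklist items the output passes (0.0 to 1.0)."""
    passed = sum(1 for check in CHECKS.values() if check(output))
    return passed / len(CHECKS)


def evaluate(prompt_template: str, test_inputs: list[str],
             run_model: Callable[[str], str]) -> float:
    """Run every test input through the prompt and average the grades on a 0-10 scale."""
    grades = [grade(run_model(prompt_template.format(input=text)))
              for text in test_inputs]
    return round(10 * sum(grades) / len(grades), 1)


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call: echoes the last prompt line, with no final period."""
    return "Summary: " + prompt.splitlines()[-1]


test_inputs = ["First article text", "Second article text", "Third article text"]
score = evaluate("Summarize in one sentence:\n{input}", test_inputs, fake_model)
print(f"Version one: {score} out of 10")
```

In real use you'd replace `fake_model` with a call to whatever model you're prompting, and the three inputs with your full test set. The grader and the inputs stay fixed between runs; only the prompt changes.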

Then you make a change. Maybe you add a few-shot example, or rewrite the instructions to be more specific. You run the same ten test cases through the updated prompt, feed the outputs to the same grader, and get a new score.

Version two: 7.4 out of 10. You improved, and you can see by how much. And you have a good idea why, because you only changed one thing.

Or version two: 5.8. You got worse. You find out immediately, revert, and try something else.
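The keep-or-revert step can be sketched the same way. This is an illustration under assumptions of my own: `fake_model`, `short_enough`, and the two prompt versions are made up for the example, and the grader here is a simple pass/fail check rather than a rubric score.

```python
from typing import Callable


def pass_count(prompt: str, test_inputs: list[str],
               run_model: Callable[[str, str], str],
               passes: Callable[[str], bool]) -> int:
    """Count how many test inputs produce an output the grader accepts."""
    return sum(1 for text in test_inputs if passes(run_model(prompt, text)))


def choose_version(current: str, candidate: str, test_inputs: list[str],
                   run_model: Callable[[str, str], str],
                   passes: Callable[[str], bool]) -> tuple[str, int, int]:
    """Score both prompts on the same inputs; keep the candidate only if it scores higher."""
    baseline = pass_count(current, test_inputs, run_model, passes)
    new_score = pass_count(candidate, test_inputs, run_model, passes)
    winner = candidate if new_score > baseline else current
    return winner, baseline, new_score


def fake_model(prompt: str, text: str) -> str:
    """Toy stand-in: only respects a length limit when the prompt states one."""
    return text[:20] if "20 characters" in prompt else text


def short_enough(output: str) -> bool:
    """Hypothetical pass/fail grader: the output must fit in 20 characters."""
    return len(output) <= 20


inputs = ["short", "a much longer input that exceeds the limit",
          "tiny", "another input that is far too long"]
v1 = "Shorten this text."
v2 = "Shorten this text to at most 20 characters."

winner, baseline, new_score = choose_version(v1, v2, inputs, fake_model, short_enough)
print(f"v1 passed {baseline}/{len(inputs)}, v2 passed {new_score}/{len(inputs)}")
# prints: v1 passed 2/4, v2 passed 4/4
```

Because the candidate only wins on a strictly higher score, a change that makes no difference gets reverted too, which keeps the prompt from accumulating instructions that don't earn their place.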

The ceiling you don’t notice

Before this, I was doing prompt engineering the way most people do it: accumulating techniques, applying them based on intuition, hoping the result was good. Experience matters, but it has a ceiling.

The ceiling is that you’re always working from feel. You can’t tell the difference between a prompt that’s genuinely good and a prompt that happened to produce a nice output on the one input you tested it with.

Evaluation changes the conversation from “I think this is better” to “this scored 23% higher across ten test cases.” One is a guess. The other is a measurement.
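A figure like that is just the relative change between two scores. As a small worked example, assuming the 6.2, 7.4, and 5.8 scores from earlier (`relative_change` is a helper name I made up):

```python
def relative_change(old_score: float, new_score: float) -> float:
    """Percentage change from old_score to new_score."""
    if old_score == 0:
        raise ValueError("relative change is undefined for a baseline of zero")
    return 100 * (new_score - old_score) / old_score


print(f"{relative_change(6.2, 7.4):.1f}%")  # prints: 19.4%
print(f"{relative_change(6.2, 5.8):.1f}%")  # prints: -6.5%
```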

It also changes what prompt engineering even means. Instead of memorizing tips, you’re running experiments. You have a hypothesis: “adding examples will help the model understand the format I want.” You test it across a set of real inputs and see what the numbers say.

That’s a different activity from scrolling Twitter for prompting tricks and hoping they transfer to your use case. It’s also the missing piece I was looking for when I wrote about how different AI models handle the same prompts differently — evaluation would have told me exactly how much each model’s output varied, instead of me going on feel.

Where I’m starting

I’m still early in applying this properly. Building a good test set takes work, and designing a grader that measures what you actually care about is harder than it sounds. Building one that rewards outputs that actually are good, not just outputs that look good, is a whole other problem.

But even a rough version of this, ten test inputs and a simple rubric, is more information than I had before. Right now that’s enough to start.

I spent months collecting prompting techniques without any way to tell if they were working. Turns out the missing skill wasn’t better prompts — it was measuring the ones I already had.