What I thought I knew

Before this week, my mental model of AI pricing was simple. You pay per token. Input tokens cost some amount, output tokens cost more, you add them up. System prompt counts as input. Tool definitions count as input. Every conversation starts from zero and you pay for every token you send.

Predictable. Also wrong, or at least not the whole picture.

I was reading the Anthropic docs properly this time and ran into a feature called prompt caching. It changed how I think about agent workflow design.

The default cost model

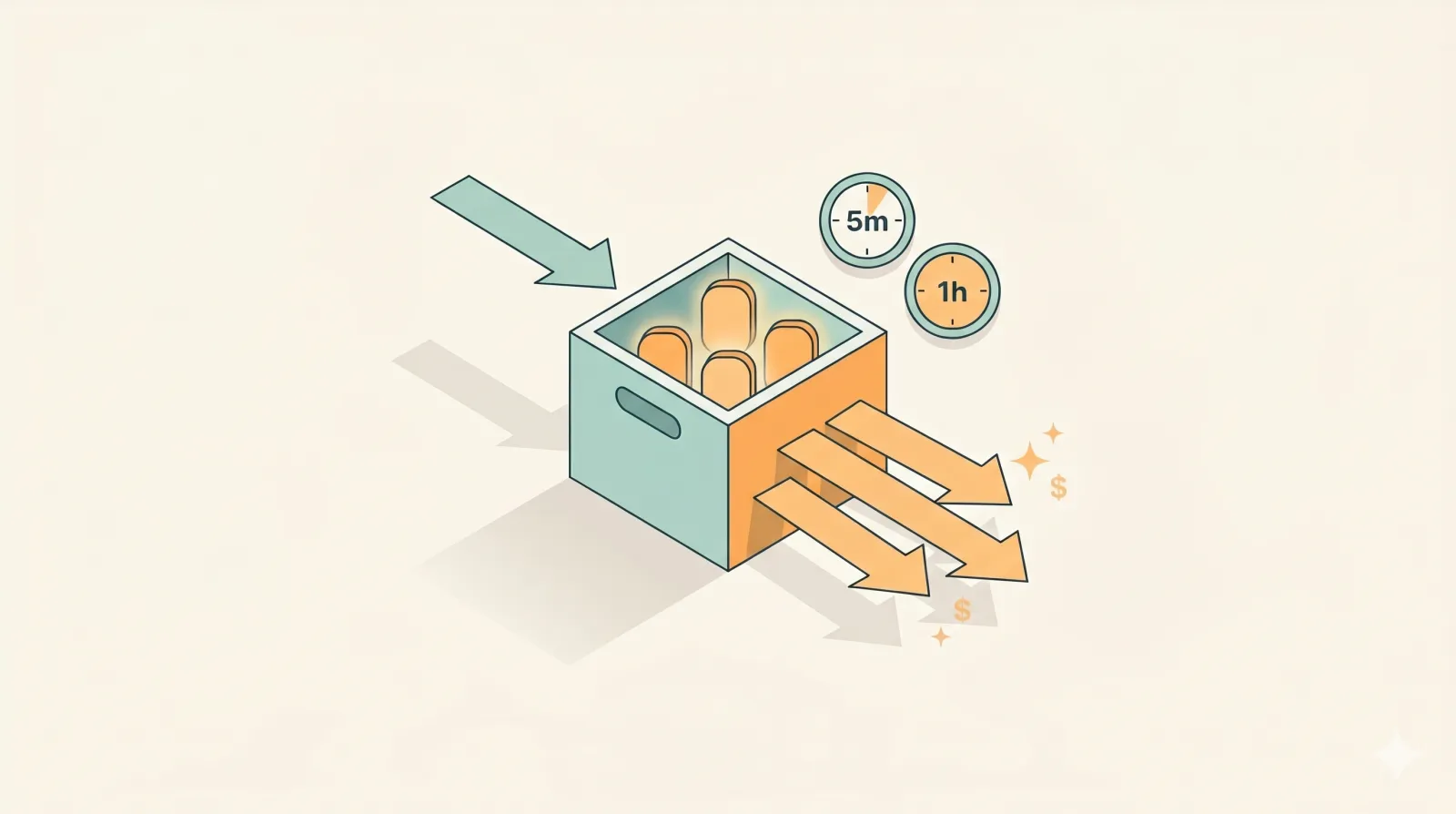

Without caching, every call pays full price for everything you send. Long system prompt? Pay for it. Tool schemas? Pay for them. The 50-message conversation history? Pay for the whole thing on every turn.

For a one-shot chat that’s fine. For an agent that calls the model hundreds of times with the same system prompt and tool definitions? You’re paying for the same tokens over and over.
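A rough count makes the repetition visible. This sketch totals the billed input tokens for an agent loop with no caching. The function name and all the sizes (10k-token system prompt, 3k tokens of tool schemas, about 500 new tokens of history per turn, 50 turns) are made-up assumptions, not measurements.

```python
def uncached_input_tokens(system_tokens: int, tool_tokens: int,
                          tokens_per_turn: int, turns: int) -> int:
    """Total input tokens billed over `turns` calls when nothing is cached.

    Every call re-sends the system prompt, the tool schemas, and the whole
    conversation history so far. History is assumed to grow by a fixed
    `tokens_per_turn` each turn (a simplification).
    """
    total = 0
    for turn in range(1, turns + 1):
        history = tokens_per_turn * turn  # everything said up to and including this turn
        total += system_tokens + tool_tokens + history
    return total


if __name__ == "__main__":
    total = uncached_input_tokens(10_000, 3_000, 500, 50)
    fixed_prefix = (10_000 + 3_000) * 50  # the identical part, re-sent on every call
    print(total, fixed_prefix)  # 1287500 650000
```

About half of those 1,287,500 tokens is the same 13k-token prefix sent 50 times, and the history part grows roughly with the square of the number of turns.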

What caching changes

Anthropic gives you two cache TTLs (time-to-live): 5 minutes, the default, and 1 hour.

- 5-minute cache: write costs 1.25x the base input rate. Reads cost about a tenth of base.

- 1-hour cache: write costs 2x the base input rate. Reads still cost about a tenth of base.

The shape is: pay a premium once to write something into the cache. Every later call within the TTL that matches the cached prefix pays the read price, which is a fraction of the normal input cost, and each hit resets the TTL clock.

So if you have a 10k-token system prompt that gets reused 100 times in an hour, you pay the 2x premium once and about a tenth of base for the other 99 calls, instead of full price all 100 times. That works out to roughly 12% of the uncached cost for that prompt.
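Here is that arithmetic as a small sketch, using the write and read multipliers above and counting cost in units of the base input price. It assumes the best case: every call after the first lands inside the TTL and hits the cache.

```python
WRITE_5M = 1.25  # 5-minute cache write, relative to the base input price
WRITE_1H = 2.0   # 1-hour cache write
READ = 0.1       # cache read, either TTL


def cached_cost(prompt_tokens: int, calls: int, write_mult: float) -> float:
    """One cache write on the first call, cache reads on every later call."""
    if calls <= 0:
        return 0.0
    return prompt_tokens * (write_mult + READ * (calls - 1))


def uncached_cost(prompt_tokens: int, calls: int) -> float:
    """Full base price on every call."""
    return float(prompt_tokens * max(calls, 0))


if __name__ == "__main__":
    uncached = uncached_cost(10_000, 100)
    cached = cached_cost(10_000, 100, WRITE_1H)
    print(f"uncached={uncached:,.0f} cached={cached:,.0f} ratio={cached / uncached:.3f}")
    # uncached=1,000,000 cached=119,000 ratio=0.119
```

That ratio is roughly an 8x saving on the cached part of the input.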

What this actually unlocks

This is the part that flipped a switch for me. The question stops being “how do I write a shorter prompt to save money” and becomes “what parts of my prompt are stable enough to cache.”

Good cache candidates:

- Long system prompts with detailed instructions

- Agent rules, persona definitions, output format specs

- Tool and function schemas

- Reference documents the agent needs on every call

- Few-shot examples

Bad cache candidates:

- The current user message

- Anything that changes every call

- Short prompts where the write premium isn’t worth it, or that fall below the model’s minimum cacheable length and can’t be cached at all
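The "isn’t worth it" line has a concrete threshold. Counting a cached prefix as one write plus a read on every later use, this sketch solves for the smallest number of uses at which caching breaks even. It only looks at the price multipliers and assumes all uses fall inside a single TTL; the function name is just for illustration.

```python
import math

READ = 0.1  # cache read price relative to base input


def break_even_uses(write_mult: float) -> int:
    """Fewest uses (all within one TTL) at which caching costs no more than not caching.

    Cached:   write_mult + READ * (n - 1)
    Uncached: n
    Setting cached <= uncached gives n >= (write_mult - READ) / (1 - READ).
    """
    return math.ceil((write_mult - READ) / (1 - READ))


if __name__ == "__main__":
    print(break_even_uses(1.25), break_even_uses(2.0))  # 2 3
```

A 5-minute entry pays off from its second use. A 1-hour entry needs a third: used only twice, it costs 2.1x the base price against 2.0x for sending the prompt uncached.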

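The split matters. You’re designing your prompt in two layers now: the stable layer that goes in the cache, and the changing layer that doesn’t. Because the cache matches on the prompt prefix, the stable layer has to come first, and anything that varies goes after it.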
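Here is roughly what the two layers look like in a Messages API request body. As I read the prompt caching docs, the cached prefix covers tools, then system, then messages, in that order, up to and including the block marked with `cache_control`. Putting the marker on the last system block should therefore cache the tools and system prompt while leaving the user message out. The model name and the `lookup_order` tool are placeholders, and the `ttl` field reflects my reading of the docs, so check it against the current API reference. The sketch only builds the dict; it makes no network call.

```python
from typing import Any, Dict, Optional

# Stable layer (hypothetical content): identical on every call.
SYSTEM_PROMPT = "You are an order-support agent. <long, unchanging rules and format spec>"
TOOLS = [
    {
        "name": "lookup_order",  # hypothetical tool
        "description": "Look up an order by its ID.",
        "input_schema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
]


def build_request(user_message: str, ttl: Optional[str] = None) -> Dict[str, Any]:
    """Build a Messages API request body with a cache breakpoint after the system prompt."""
    cache_control: Dict[str, str] = {"type": "ephemeral"}
    if ttl is not None:
        cache_control["ttl"] = ttl  # "5m" (default) or "1h"
    return {
        "model": "claude-model-placeholder",  # substitute a real model ID
        "max_tokens": 1024,
        "tools": TOOLS,
        "system": [
            # Breakpoint here: tools + system become the cached prefix.
            {"type": "text", "text": SYSTEM_PROMPT, "cache_control": cache_control},
        ],
        # Changing layer: comes after the breakpoint, so it is never cached.
        "messages": [{"role": "user", "content": user_message}],
    }


if __name__ == "__main__":
    request = build_request("Where is order 1234?", ttl="1h")
    print(request["system"][0]["cache_control"])  # {'type': 'ephemeral', 'ttl': '1h'}
```

With the official `anthropic` Python SDK, a dict like this could be passed as `client.messages.create(**request)`. In an agent loop only the user message (and later the growing history) changes, so every call shares the same prefix.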

Picking the TTL

5 minutes vs 1 hour comes down to how often you reuse the content.

If you’re running an agent loop that fires every few seconds, 5 minutes is fine and the write premium is lower. If you have a system prompt that gets reused across user sessions throughout the day, 1 hour pays back the higher write cost because you avoid re-writing the cache as often.

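Get this wrong in either direction and you lose money. Pick 1 hour for something that only gets used twice, and the 2x write plus one discounted read costs slightly more than sending it uncached both times. Pick 5 minutes for something used on and off all day, with gaps longer than five minutes between calls, and you pay the write premium again every time the cache lapses.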
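One way to check the choice is to replay a workload’s call times against each TTL. This is a toy model, not Anthropic’s implementation: it prices the cached prefix in multiples of its uncached per-call cost and assumes every hit resets the TTL, so the entry only lapses after a gap longer than the TTL. The function name and both call schedules are invented for illustration.

```python
from typing import Optional, Sequence

READ = 0.1
WRITE = {"5m": 1.25, "1h": 2.0}
TTL_MINUTES = {"5m": 5.0, "1h": 60.0}


def prefix_cost(call_minutes: Sequence[float], ttl: str) -> float:
    """Cost of the cached prefix over a schedule of calls (times in minutes).

    Assumption: each hit refreshes the TTL, so the entry expires only when the
    gap since the previous call exceeds the TTL. Uncached cost would be len(call_minutes).
    """
    cost = 0.0
    expires_at: Optional[float] = None
    for t in sorted(call_minutes):
        if expires_at is not None and t <= expires_at:
            cost += READ        # still warm: cache read
        else:
            cost += WRITE[ttl]  # cold or lapsed: pay the write premium again
        expires_at = t + TTL_MINUTES[ttl]
    return cost


if __name__ == "__main__":
    bursty = [i / 6 for i in range(120)]    # every 10 seconds for 20 minutes
    sporadic = [20 * i for i in range(24)]  # every 20 minutes for 8 hours
    for name, calls in (("bursty", bursty), ("sporadic", sporadic)):
        print(name, len(calls),
              round(prefix_cost(calls, "5m"), 2),
              round(prefix_cost(calls, "1h"), 2))
```

For the bursty loop the 5-minute TTL comes out cheaper (13.15 vs 13.9, against 120 uncached). For the sporadic schedule the 5-minute entry lapses before every call and costs 30.0, worse than the 24.0 of not caching at all, while the 1-hour entry costs 4.3.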

The trap

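The write premium is real. You can’t just dump everything into the cache and call it a day. If you cache content that only gets used once, you paid extra for no benefit. If you cache something that changes every call, the prefix never matches and you pay the write cost over and over. And because matching is by prefix, one changing value near the top of the prompt, like a timestamp, breaks the cache for everything after it.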
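To see why a single changing value is so costly, here is a toy model of prefix matching. It is not how Anthropic computes cache keys; it just hashes every block up to the cache breakpoint and counts repeats, ignoring the TTL. The helper names and prompt contents are placeholders.

```python
import hashlib
from typing import List


def prefix_key(blocks: List[str], breakpoint: int) -> str:
    """Toy cache key: hash of every block up to and including the breakpoint."""
    h = hashlib.sha256()
    for block in blocks[: breakpoint + 1]:
        h.update(block.encode("utf-8"))
        h.update(b"\x00")  # separator so block boundaries change the hash
    return h.hexdigest()


def count_hits(calls: List[List[str]], breakpoint: int) -> int:
    """How many calls find their prefix already cached (TTL ignored)."""
    seen = set()
    hits = 0
    for blocks in calls:
        key = prefix_key(blocks, breakpoint)
        if key in seen:
            hits += 1
        else:
            seen.add(key)
    return hits


if __name__ == "__main__":
    rules = "Agent rules and output format (long, stable)"
    schemas = "Tool schemas (long, stable)"
    questions = ["q1", "q2", "q3", "q4"]

    # Stable blocks first, breakpoint after them, user message last.
    good = [[rules, schemas, f"user: {q}"] for q in questions]
    # Same content, but a timestamp sits at the very top of the prompt.
    bad = [[f"Current time: 09:00:{i:02d}", rules, schemas, f"user: {q}"]
           for i, q in enumerate(questions)]

    print(count_hits(good, breakpoint=1))  # 3
    print(count_hits(bad, breakpoint=2))   # 0
```

Both layouts send the same rules and schemas, but putting the timestamp first turns every call into a miss. Moving it below the breakpoint, next to the user message, would restore the hits.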

Caching is a design decision, not a flag. You have to look at your workflow and decide which parts are genuinely stable, how often they get reused, and which TTL fits the pattern.

Where I’m landing

I came in thinking prompt caching was a small optimization knob. It’s not. For any agent workflow that calls the model repeatedly with shared context, this changes the cost structure. Big multiples of savings on the repeated input, not single-digit percentage trims. Output tokens cost the same either way.

There’s more to dig into. Cache hit rates, prefix matching, structuring messages so the cache key stays stable. I’m still early on all of it. But even the basic version, “put the stable stuff in the cache, keep the changing stuff out,” cuts costs on anything that runs at scale.
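For hit rates, the Messages API reports cache activity in each response’s `usage` object through `cache_creation_input_tokens` and `cache_read_input_tokens`, alongside `input_tokens` for the uncached remainder. A minimal sketch of a token-weighted read share across calls, using a hypothetical `Usage` dataclass that mirrors those fields and invented numbers:

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Usage:
    # Mirrors three fields of the Messages API `usage` object.
    input_tokens: int                 # input not written to or read from the cache
    cache_creation_input_tokens: int  # input written to the cache on this call
    cache_read_input_tokens: int      # input served from the cache on this call


def cache_read_share(usages: Iterable[Usage]) -> float:
    """Fraction of all input tokens, across calls, that came from cache reads."""
    read = total = 0
    for u in usages:
        read += u.cache_read_input_tokens
        total += u.input_tokens + u.cache_creation_input_tokens + u.cache_read_input_tokens
    return read / total if total else 0.0


if __name__ == "__main__":
    calls = [
        Usage(200, 10_000, 0),  # first call writes the 10k-token prefix
        Usage(180, 0, 10_000),  # later calls read it
        Usage(220, 0, 10_000),
    ]
    print(f"{cache_read_share(calls):.1%}")  # 65.4%
```

A share that stays near zero after the first call usually means something in the prefix is changing between calls.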

For companies running AI agent workflows in production, this is the kind of thing that decides whether the unit economics work.

Thought I understood AI pricing. One feature in the docs and my whole mental model needed an update.