The part that keeps clicking

I finished my first RAG chatbot a week ago. Since then I can’t stop seeing embeddings everywhere.

The project itself was small. Documents in, chunks out, embed, store, retrieve, generate. Nothing fancy. But the idea behind it? That’s been rewiring how I understand a bunch of products I’ve used for years without really getting how they worked.

The core idea, one more time

Embedding search is simple once it clicks. You take any piece of content and convert it into a vector: a list of numbers that represents its meaning. You do the same thing with a query. Then you compare the vectors and return whatever is closest.

That’s it. No keyword matching. No string scanning. You’re just measuring distance in a space where similar meanings end up near each other.
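To make “closest” concrete, here’s a minimal sketch using cosine similarity, one common way to compare vectors. The three-number vectors are hand-written for illustration and don’t come from a real model; real embeddings have hundreds or thousands of dimensions. The names `cosine_similarity`, `closest`, `documents`, and `query_vec` are my own.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)

# Toy vectors, written by hand (an assumption, not real model output).
documents = {
    "steps to recover account access": [0.8, 0.3, 0.1],
    "best pizza toppings": [0.0, 0.2, 0.9],
}

# Pretend this is the embedding of "how do I reset my password".
query_vec = [0.85, 0.2, 0.05]

def closest(query, items):
    """Return the key whose vector is most similar to the query vector."""
    return max(items, key=lambda name: cosine_similarity(query, items[name]))

print(closest(query_vec, documents))
```

The query shares no keywords with the account-recovery document, but their vectors point in nearly the same direction, so that is the one that comes back.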

The kicker: the content doesn’t have to be text.

Google Photos, finally making sense

I’ve used Google Photos for years. I type “dog” and it shows me my dog. I type “beach” and it shows me beach photos. I assumed there was some classifier running in the background, tagging everything with labels.

That’s not wrong, but it’s not the full picture. As far as I can tell, what happens is closer to this: every photo gets embedded into a vector that captures what’s in the image. When I search “dog,” the word gets embedded into the same space. The app returns the photos whose vectors are closest to my query vector.

This is why searching “animal” also surfaces dog pictures. The word “animal” was never attached to the photo. But its meaning sits close to “dog” in the vector space, and the photo of my dog sits close to both. Similarity wins.

I used this feature for years and had no mental model for it. Now I do.

Recommendations are the same trick

Same thing with YouTube, Spotify, or Netflix. Say I click a song I like on Spotify. The recommender doesn’t just look at the genre tag. It looks at a vector that represents the feel of the track: tempo, key, instrumentation, mood, everything rolled into one embedding. Then it finds tracks whose vectors are nearby and queues them up.

Movie search works the same way. Imagine trying to find Titanic without remembering the name. You type: “old romantic movie, big ship, hits an iceberg, the girl survives.” Possibly none of those words appear in the movie’s title or tagline. But if the movie’s description and metadata were embedded well, your query embedding lands near Titanic’s embedding, and it surfaces.

You stop needing the exact words. You need the meaning to be close. That’s a totally different kind of search.

Coding agents work this way too

This one surprised me the most. I kept wondering how tools like Cursor manage to find the right code in a large project without reading every file. The answer is the same trick.

When you open a codebase, the agent chunks your code, embeds each chunk, and stores the vectors in a vector database. Now your whole repo lives as vectors. When you ask it to “add a login button,” it embeds your request and searches for the closest chunks: probably the existing login component, the auth hook, and the routing file that handles the session.

Before generating anything, the agent has already narrowed the context to the few places that matter. It didn’t grep for “login.” It searched for meaning. That’s why it can find relevant code even when your wording doesn’t match the variable names.

This also explains why these agents scale. You can’t fit a real codebase in a context window. But you can fit a few retrieved chunks. That’s what embedding search buys you.
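Here’s a rough sketch of that last step: given chunks that already have similarity scores, keep the best ones that fit in a token budget. Everything here is an assumption for illustration, not how Cursor or any other tool actually does it: the `ScoredChunk` type, the file paths, the scores, and the crude four-characters-per-token estimate are all made up.

```python
from dataclasses import dataclass

@dataclass
class ScoredChunk:
    path: str
    text: str
    score: float  # similarity to the request, assumed computed elsewhere

def estimate_tokens(text):
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)

def pick_context(chunks, token_budget):
    """Greedily keep the highest-scoring chunks that still fit the budget."""
    picked, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost <= token_budget:
            picked.append(chunk)
            used += cost
    return picked

# Hypothetical repo chunks; "x" * n stands in for real source text.
example_chunks = [
    ScoredChunk("src/components/LoginForm.tsx", "x" * 400, 0.91),
    ScoredChunk("src/hooks/useAuth.ts", "x" * 200, 0.88),
    ScoredChunk("src/routes/session.ts", "x" * 400, 0.80),
    ScoredChunk("README.md", "x" * 2000, 0.30),
]

for chunk in pick_context(example_chunks, token_budget=160):
    print(chunk.path, chunk.score)
```

With a budget of 160 estimated tokens, only the login form and the auth hook make it in. The rest of the repo never touches the context window.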

The idea that connects everything

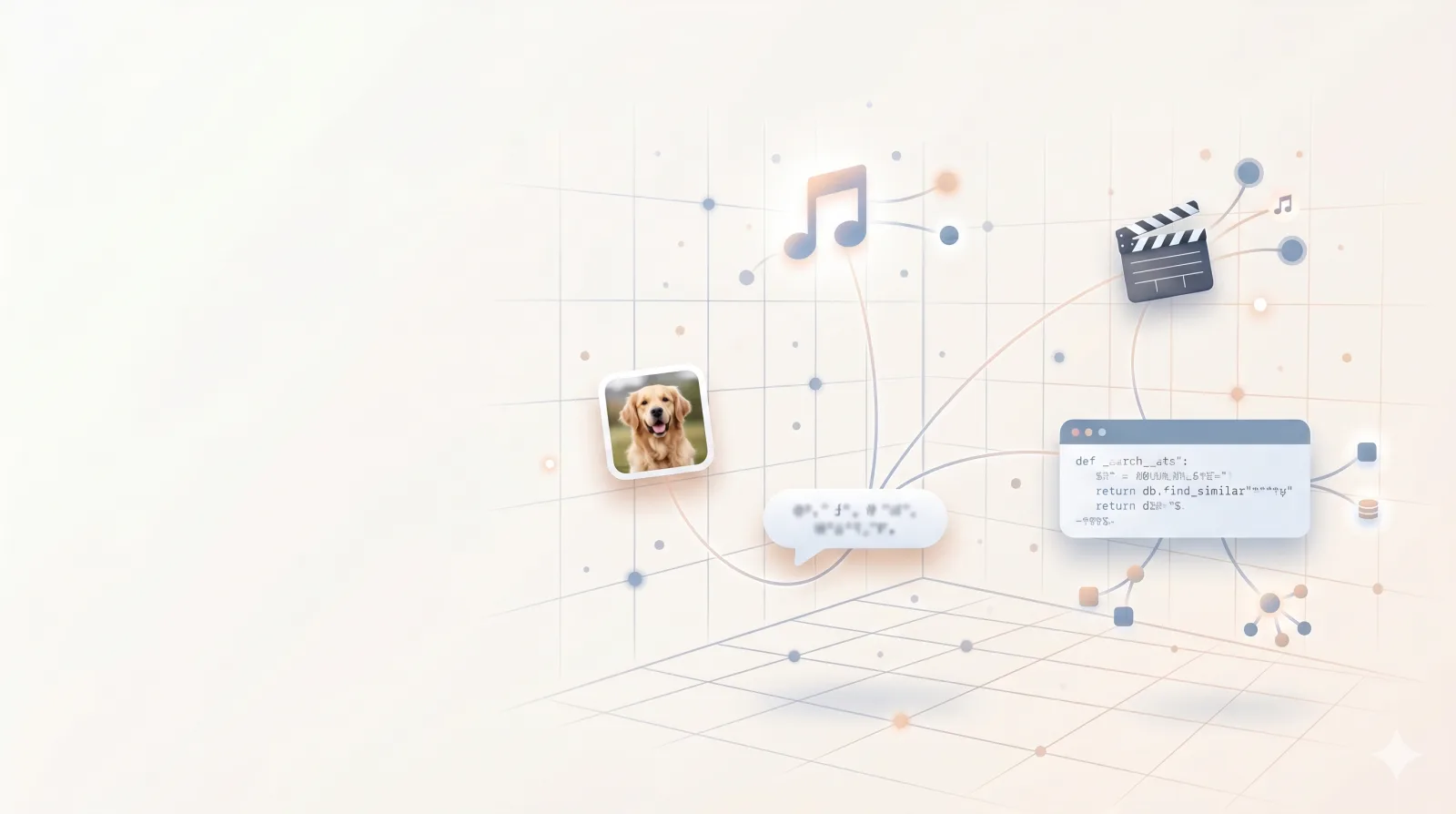

Here’s what I keep coming back to. The same mechanism powers photo search, music recommendations, movie discovery, RAG chatbots, and coding agents. Different content types, different products, same underlying move.

- Take the content. Embed it into a vector.

- Store the vectors.

- Take the query. Embed it the same way.

- Return whatever is closest.

That’s the whole shape. I missed it for years.
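Those four steps fit in a few lines of code. This is a sketch under loud assumptions, not how any of these products are built: `TinyVectorIndex`, `toy_embed`, and the hand-written vectors in `TOY_VECTORS` are all invented for illustration. A real system would call an embedding model in place of the lookup table and use a proper vector database in place of a Python list.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]

def _cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

class TinyVectorIndex:
    def __init__(self, embed: Callable[[str], Vector]):
        self.embed = embed
        self.items: List[Tuple[str, Vector]] = []

    def add(self, item: str) -> None:
        # Steps 1 and 2: embed the content, store the vector.
        self.items.append((item, self.embed(item)))

    def search(self, query: str, k: int = 1) -> List[str]:
        # Step 3: embed the query the same way.
        q = self.embed(query)
        # Step 4: return whatever is closest.
        ranked = sorted(self.items, key=lambda pair: _cosine(q, pair[1]), reverse=True)
        return [item for item, _ in ranked[:k]]

# Stand-in for a real embedding model: a hand-written lookup table.
# Made-up axes: [dog-ness, animal-ness, beach-ness].
TOY_VECTORS = {
    "photo of my dog": [0.9, 0.8, 0.0],
    "photo of the beach": [0.0, 0.1, 0.9],
    "animal": [0.5, 1.0, 0.0],
    "beach": [0.0, 0.0, 1.0],
}

def toy_embed(text: str) -> Vector:
    return TOY_VECTORS[text]

index = TinyVectorIndex(toy_embed)
index.add("photo of my dog")
index.add("photo of the beach")
print(index.search("animal"))
```

Swap the embed function and the same class would work for songs, movie descriptions, or code chunks. The index doesn’t care what the content is, only where its vector lands.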

Why this felt like an unlock

I built one small RAG chatbot. That project alone didn’t teach me anything about images or music or code. But it taught me the pattern. And the pattern shows up in products I’d been using for years without understanding them.

That’s the part I wasn’t expecting. A simple side project pulled a bunch of loose concepts into a single frame. Recommendation systems, semantic photo search, how coding agents scale to huge repos. Things I would have treated as separate topics a month ago now feel like one topic wearing different outfits.

This is why I keep building small things. You don’t always know what you’re going to learn until the pieces start connecting.

One small chatbot, and suddenly a bunch of products I’ve used for years made sense. Embeddings were hiding in plain sight.